Searches for an AI market forecast for 2026 usually start from the same need: a usable U.S. outlook for budgets, product bets, or staffing plans. The trouble is that most “forecasts” read like hype with a few charts sprinkled on top.

This piece treats 2026 as a planning horizon, not a fortune-telling exercise. You will get a practical 2026 artificial intelligence market outlook for the U.S., the demand signals that matter, and a way to translate trend talk into enterprise decisions.

One quick expectation reset: market sizing and revenue forecasts can differ widely because analysts define “AI” differently, count different layers of the stack, and assume different adoption curves. So instead of anchoring on a single number, the better move is to use scenario ranges and track the leading indicators that shift those ranges.

What’s really driving U.S. AI demand into 2026

For most U.S. companies, 2026 growth does not hinge on whether AI is “important.” That argument is already settled. The swing factor is whether deployments move from pilots into workflows that survive audits, cost reviews, and quarterly roadmaps.

Here are the demand drivers that show up repeatedly when you read 2026 U.S. AI industry growth projections through an operator lens:

- Cost pressure + productivity mandates: CFOs want measurable impact, not just experimentation. Automation, assisted service, and code productivity remain common entry points.

- Data gravity: Firms with cleaner data pipelines move faster; everyone else pays a “data tax” before benefits appear.

- Security and compliance requirements: Controls around data leakage, model access, and logging often decide whether genAI goes wide.

- Vendor consolidation: Many orgs reduce tool sprawl, which shifts spend toward fewer platforms with stronger governance.

- Talent constraints: Teams avoid architectures that require rare skills to operate; managed services and simpler stacks win deals.

2026 market outlook: three scenarios you can plan around

When teams ask for a 2026 U.S. AI market size estimate, they usually want certainty for procurement and headcount. In reality, it is more useful to plan with scenarios tied to measurable triggers, then adjust quarterly.

According to OECD guidance on measuring AI, definitions and measurement approaches can materially change reported totals, which is why scenario planning tends to be safer than betting on one headline number.

Use this simple framework for a 2026 U.S. AI spending forecast:

- Conservative scenario: AI expands, but budget growth slows; more scrutiny on ROI; more “pause and standardize” moves.

- Base scenario: Steady enterprise rollouts in customer ops, developer tooling, analytics modernization; governance becomes a buying requirement.

- Upside scenario: Faster deployment of genAI copilots and automation, stronger model performance per dollar, and clearer compliance patterns.

Key point: the gap between these scenarios often comes down to unit economics (compute, licensing, labor) and risk controls (security, privacy, regulatory readiness). When those improve, adoption accelerates.
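One way to make the quarterly scenario choice repeatable is to turn the two swing factors into explicit checks. The sketch below is illustrative only: `QuarterSignals`, `pick_scenario`, the use of "blended cost per task" and "count of required controls in place" as proxies, and the rule that both factors improving means "upside" are all hypothetical assumptions, not an established method. The numbers are made up.

```python
from dataclasses import dataclass
from enum import Enum


class Scenario(Enum):
    CONSERVATIVE = "conservative"
    BASE = "base"
    UPSIDE = "upside"


@dataclass
class QuarterSignals:
    # Hypothetical proxy for unit economics: blended cost per task
    # (compute + licensing + labor), previous vs. current quarter.
    cost_per_task_prev: float
    cost_per_task_now: float
    # Hypothetical proxy for risk controls: required controls in place.
    controls_ready_prev: int
    controls_ready_now: int


def pick_scenario(s: QuarterSignals) -> Scenario:
    """Illustrative rule: count how many of the two swing factors improved."""
    unit_economics_improving = s.cost_per_task_now < s.cost_per_task_prev
    risk_controls_improving = s.controls_ready_now > s.controls_ready_prev
    improvements = int(unit_economics_improving) + int(risk_controls_improving)
    if improvements == 2:
        return Scenario.UPSIDE
    if improvements == 1:
        return Scenario.BASE
    return Scenario.CONSERVATIVE


# Made-up quarter: cost per task fell, but no new controls shipped.
q3 = QuarterSignals(cost_per_task_prev=0.42, cost_per_task_now=0.35,
                    controls_ready_prev=6, controls_ready_now=6)
print(pick_scenario(q3).value)  # base
```

A real model would likely use thresholds rather than "any improvement", but keeping the rule this blunt makes the assumption easy to debate in a planning review.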

Machine learning vs. generative AI: what changes by 2026

In many roadmaps, “AI” gets treated like one category. It is not. Machine learning market trends and genAI adoption in 2026 behave differently in procurement, implementation, and value realization.

Machine learning (ML) in 2026: quieter, but embedded

- Pattern: ML continues to hide inside fraud detection, demand forecasting, pricing, maintenance, and risk scoring.

- Buying motion: platform standardization and MLOps maturity matter more than “new model” headlines.

- Success metric: uplift on a KPI with stable monitoring and drift management.
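“Drift management” can start very simply. As one example (a swapped-in technique, not something this article prescribes), the Population Stability Index compares a feature's binned distribution at training time with what the model sees in production. The bin proportions below are made up, and the 0.2 alert level is a commonly cited rule of thumb rather than a standard; tune it for your own data.

```python
import math


def population_stability_index(expected: list[float], actual: list[float],
                               eps: float = 1e-6) -> float:
    """PSI between two binned distributions given as proportions (each sums to ~1)."""
    if len(expected) != len(actual):
        raise ValueError("expected and actual must have the same number of bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        # Clamp empty bins so log() and division stay defined.
        e = max(e, eps)
        a = max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


baseline = [0.25, 0.25, 0.25, 0.25]   # share of scores per bin at training time
this_week = [0.10, 0.20, 0.30, 0.40]  # share of scores per bin in production
score = population_stability_index(baseline, this_week)
print(round(score, 3))
print("investigate drift" if score > 0.2 else "stable")  # rule-of-thumb threshold
```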

Generative AI in 2026: broader surface area, tougher governance

- Pattern: copilots expand into sales, support, finance ops, HR, and engineering.

- Buying motion: security review, legal review, and data handling determine time-to-value.

- Success metric: task throughput, cycle time reduction, and consistent quality under real-world prompts.

When people ask for 2026 generative AI revenue projections, it helps to separate “vendor revenue” from “enterprise value.” Vendor revenue depends on pricing models and packaging; enterprise value depends on workflow redesign and adoption, which is slower but stickier.

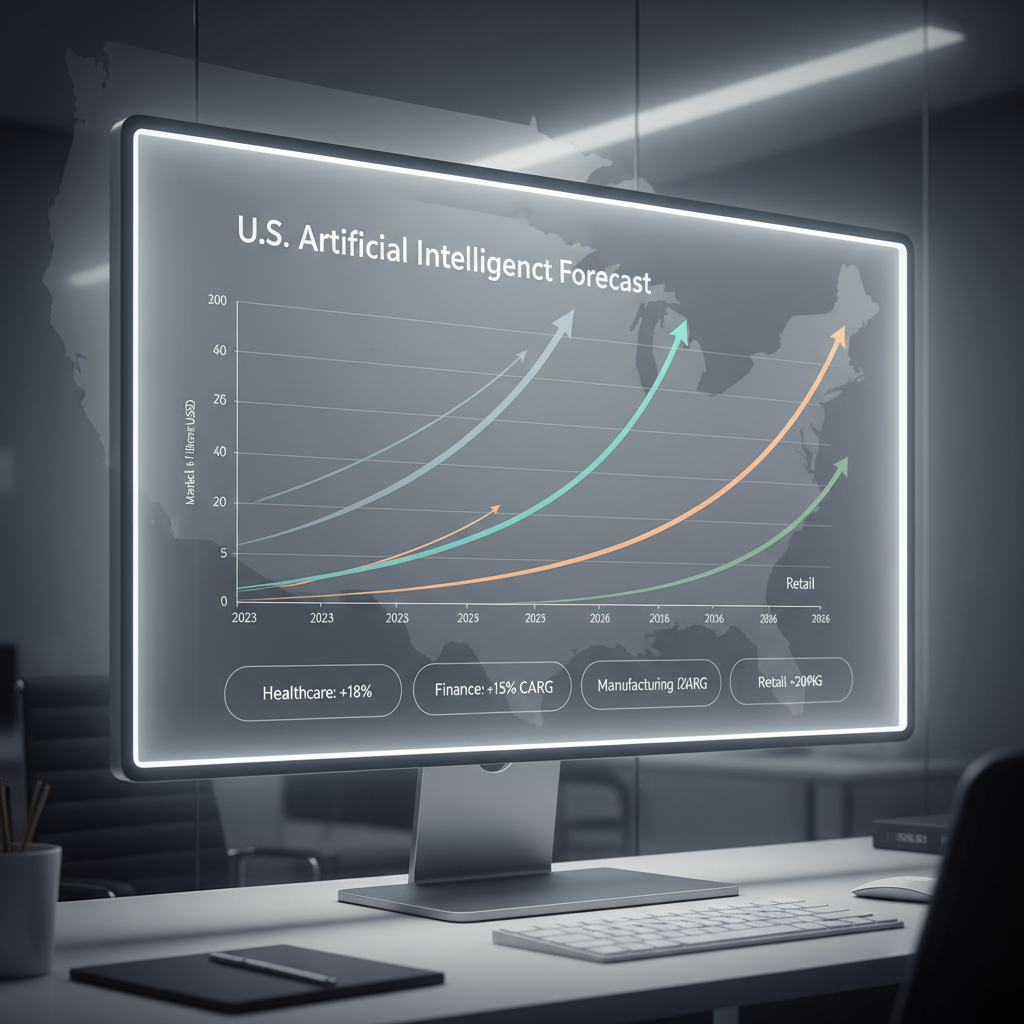

Sector-wise AI growth forecast 2026: where adoption tends to concentrate

A sector view is useful because the constraint is rarely “interest.” It is data sensitivity, integration complexity, and who owns the workflow. A realistic sector-wise AI growth forecast for 2026 looks like this:

| Sector | Likely 2026 focus areas | Common blockers |
|---|---|---|
| Financial services | Fraud, AML assistance, customer support, developer productivity | Model risk management, audit trails, vendor governance |
| Healthcare | Documentation support, scheduling, revenue cycle, imaging workflows | Privacy controls, integration with EHR, clinical validation needs |
| Retail & eCommerce | Personalization, merchandising, service automation, demand planning | Data fragmentation, thin margins, change management |
| Manufacturing | Predictive maintenance, quality inspection, supply planning | OT/IT integration, edge deployment, sensor data quality |
| Media & marketing | Content ops, creative iteration, audience insights | IP risk, brand safety, measurement standards |

According to NIST, trustworthy AI hinges on governance topics like transparency, privacy, and robustness; in regulated or high-liability sectors, those requirements frequently slow rollouts but also create moats for teams that implement them well.

How to estimate U.S. enterprise AI adoption rates in 2026 (without guessing)

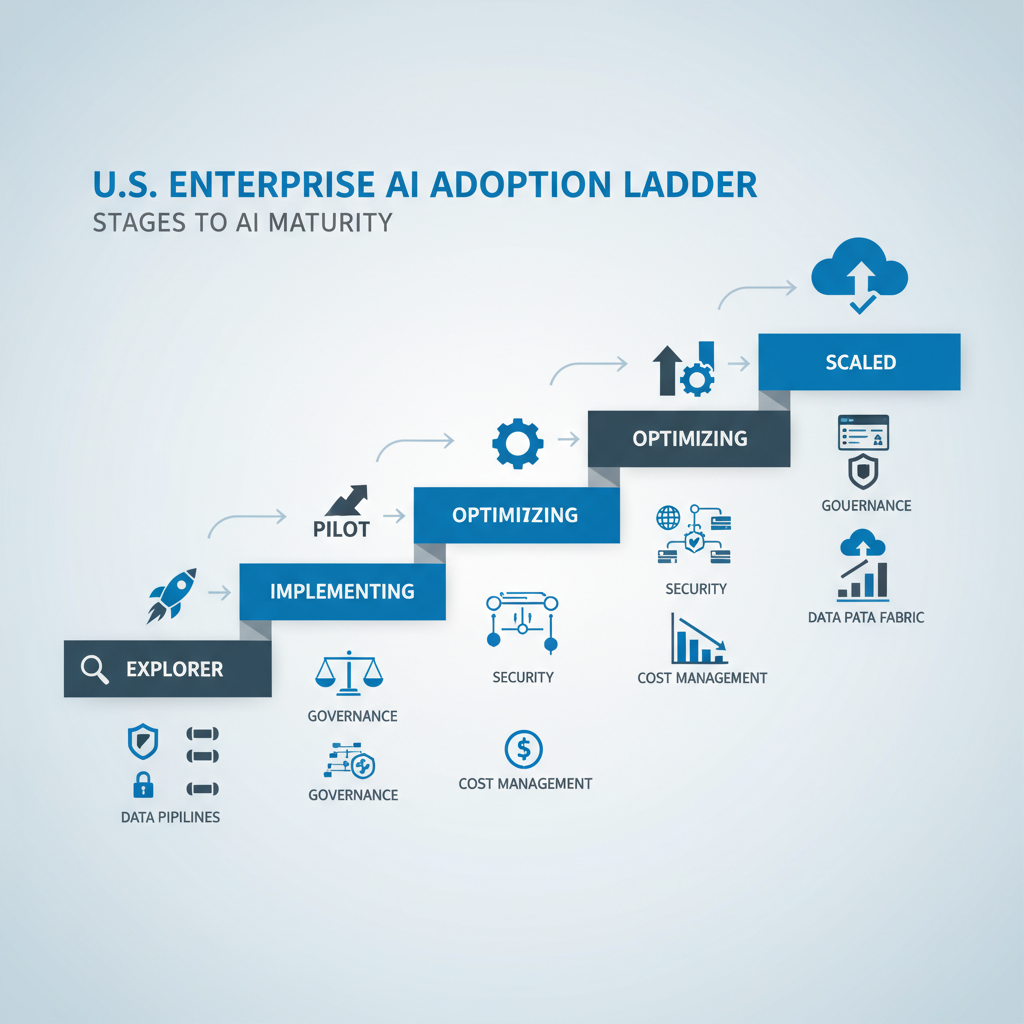

People want clean percentages for 2026 AI adoption rates in U.S. enterprises, but adoption is not a single moment. It is a ladder: experimenting, piloting, deploying in one function, then scaling across functions with shared controls.

Use this quick self-check to classify where your organization sits today, then project one level forward for 2026 rather than five levels forward.

- Explorer: lots of demos, a few sandbox proofs, limited production usage.

- Pilot-heavy: multiple pilots with mixed success, unclear platform strategy.

- Early scaler: a handful of production workflows, central governance forming, budgeting gets real.

- Scaled: shared platform, strong monitoring, clear cost controls, repeatable deployment patterns.
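The self-check above can be encoded so that the “one level forward” rule is explicit. This is a sketch under stated assumptions: `AdoptionStage`, `classify`, `target_for_2026`, and every numeric threshold (three pilots, two production workflows, ten production workflows) are hypothetical choices for illustration, not benchmarks.

```python
from enum import IntEnum


class AdoptionStage(IntEnum):
    EXPLORER = 1
    PILOT_HEAVY = 2
    EARLY_SCALER = 3
    SCALED = 4


def classify(production_workflows: int, active_pilots: int,
             central_governance: bool, shared_platform: bool) -> AdoptionStage:
    """Rough placement on the ladder from evidence; thresholds are illustrative."""
    if shared_platform and central_governance and production_workflows >= 10:
        return AdoptionStage.SCALED
    if central_governance and production_workflows >= 2:
        return AdoptionStage.EARLY_SCALER
    if active_pilots >= 3:
        return AdoptionStage.PILOT_HEAVY
    return AdoptionStage.EXPLORER


def target_for_2026(current: AdoptionStage) -> AdoptionStage:
    """Project exactly one level forward, capped at SCALED."""
    return AdoptionStage(min(current + 1, AdoptionStage.SCALED))


now = classify(production_workflows=1, active_pilots=5,
               central_governance=False, shared_platform=False)
print(now.name, "->", target_for_2026(now).name)  # PILOT_HEAVY -> EARLY_SCALER
```

Capping the target at one step keeps a board-level plan honest: the model cannot recommend jumping from pilots straight to a shared platform.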

Reality check: if you are still debating data access rules, retention policies, and who owns model monitoring, scaling by 2026 is possible, but it usually requires central enablement plus executive backing, not just enthusiastic teams.

AI investment trends in the U.S. for 2026: where budgets typically land

In practice, U.S. AI investment for 2026 clusters into a few spend buckets. If you are doing enterprise AI budget planning for 2026, this breakdown helps you avoid the classic mistake of funding models while starving everything around them.

- Data foundation: pipelines, quality, cataloging, permissions, and data products.

- Compute and inference: cloud commitments, accelerators, serving infrastructure, latency optimization.

- Platform and tooling: MLOps, LLMOps, evaluation harnesses, prompt management, observability.

- Security and governance: access control, audit logs, red-teaming, policy enforcement.

- Change management: training, workflow redesign, enablement, internal comms.

If you only fund licenses, you might get a short-lived burst of usage and then a quiet backlash when costs show up or risk teams intervene. This is where many internal conversations about 2026 U.S. AI spending get messy.

Practical playbook: turning the 2026 forecast into decisions

Here is a grounded way to use a 2026 AI market forecast in planning, without pretending you can predict every twist.

1) Pick a small set of leading indicators

- Unit cost trend: inference cost per task, compute per workflow, or cost per resolved ticket.

- Adoption depth: weekly active users in target roles, not just “licenses assigned.”

- Quality and risk metrics: hallucination rate by category, PII leakage incidents, policy violations.

- Delivery velocity: time from idea to production for a new use case.
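The indicators above are simple ratios, which makes them easy to compute from data most teams already have. A minimal sketch, assuming a support-automation use case: `QuarterMetrics`, `leading_indicators`, and all field names and values are hypothetical. Tracking hallucination rate “by category” would repeat the same ratio per category.

```python
import statistics
from dataclasses import dataclass


@dataclass
class QuarterMetrics:
    inference_spend_usd: float
    resolved_tickets: int
    weekly_active_users: int            # active users in target roles
    licenses_assigned: int
    outputs_reviewed: int               # sampled outputs checked by reviewers
    outputs_flagged: int                # of those, flagged as hallucinated
    days_idea_to_production: list[int]  # one entry per use case shipped


def leading_indicators(m: QuarterMetrics) -> dict[str, float]:
    return {
        # Unit cost trend: spend per unit of delivered work
        "cost_per_resolved_ticket": m.inference_spend_usd / m.resolved_tickets,
        # Adoption depth: real usage, not seats handed out
        "adoption_depth": m.weekly_active_users / m.licenses_assigned,
        # Quality/risk: share of reviewed outputs flagged
        "hallucination_rate": m.outputs_flagged / m.outputs_reviewed,
        # Delivery velocity: median days from idea to production
        "median_days_to_production": float(statistics.median(m.days_idea_to_production)),
    }


q = QuarterMetrics(inference_spend_usd=12_000.0, resolved_tickets=8_000,
                   weekly_active_users=340, licenses_assigned=1_000,
                   outputs_reviewed=500, outputs_flagged=15,
                   days_idea_to_production=[30, 45, 60])
for name, value in leading_indicators(q).items():
    print(f"{name}: {value:.2f}")
```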

2) Budget in layers, not as a single AI line item

- Commit a base run-rate for platform, governance, and training.

- Hold a flexible pool for high-performing use cases and release it only when KPIs clear agreed thresholds.

- Negotiate vendor terms that match uncertainty, especially usage-based pricing where spikes can surprise teams.
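The “base run-rate plus gated pool” idea can be sketched as a small allocation rule. Everything here is a hypothetical assumption for illustration: the `UseCase` and `allocate` names, the dollar amounts, the choice to fund use cases in order of KPI-to-threshold ratio, and the simplification that every KPI is “higher is better” with a positive threshold.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    requested: float      # USD requested from the flexible pool
    kpi_value: float      # assumes higher is better
    kpi_threshold: float  # assumes a positive threshold; funds release at or above it


def allocate(base_run_rate: float, flexible_pool: float,
             use_cases: list[UseCase]) -> dict[str, float]:
    """Commit the base layer, then release pool money only to use cases past their KPI gate."""
    allocation = {"base_run_rate": base_run_rate}
    remaining = flexible_pool
    qualified = [u for u in use_cases if u.kpi_value >= u.kpi_threshold]
    # Fund the strongest performers first, relative to their own threshold.
    qualified.sort(key=lambda u: u.kpi_value / u.kpi_threshold, reverse=True)
    for u in qualified:
        grant = min(u.requested, remaining)
        allocation[u.name] = grant
        remaining -= grant
    allocation["unreleased_pool"] = remaining
    return allocation


plan = allocate(
    base_run_rate=500_000, flexible_pool=200_000,
    use_cases=[
        UseCase("support_copilot", 120_000, kpi_value=0.28, kpi_threshold=0.20),
        UseCase("code_assist", 100_000, kpi_value=0.18, kpi_threshold=0.15),
        UseCase("contract_review", 80_000, kpi_value=0.05, kpi_threshold=0.10),
    ],
)
print(plan)
```

Here `contract_review` gets nothing until its KPI clears the gate, and `code_assist` is capped by what is left in the pool; that is the intended behavior of a gated budget, not a bug.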

3) Build a “use-case portfolio,” then prune aggressively

- Keep: workflows with clear owners, measurable outcomes, and controllable data exposure.

- Pause: ideas that require perfect data or cross-team cooperation that never materializes.

- Kill: anything that cannot pass basic evaluation or fails security reviews repeatedly.
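Writing the keep/pause/kill rules down as code forces the team to agree on their order of precedence. A minimal sketch, assuming “fails security reviews repeatedly” means two or more failures (a hypothetical threshold); `Candidate`, `triage`, and the example use cases are illustrative names, not a standard framework.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    has_owner: bool
    measurable_outcome: bool
    data_exposure_controlled: bool
    passed_evaluation: bool
    failed_security_reviews: int
    needs_perfect_data: bool
    blocked_on_other_teams: bool


def triage(c: Candidate, max_security_failures: int = 2) -> str:
    # Kill first: failing basic evaluation or repeated security failures end the use case.
    if not c.passed_evaluation or c.failed_security_reviews >= max_security_failures:
        return "kill"
    # Pause: depends on perfect data or cross-team cooperation that has not materialized.
    if c.needs_perfect_data or c.blocked_on_other_teams:
        return "pause"
    # Keep: clear owner, measurable outcome, controllable data exposure.
    if c.has_owner and c.measurable_outcome and c.data_exposure_controlled:
        return "keep"
    # Otherwise pause until the missing owner, metric, or control is in place.
    return "pause"


portfolio = [
    Candidate("ticket_summaries", True, True, True, True, 0, False, False),
    Candidate("enterprise_search", True, True, True, True, 0, True, False),
    Candidate("auto_contract_drafts", True, False, False, False, 3, False, False),
]
for c in portfolio:
    print(c.name, triage(c))
```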

According to FTC guidance on AI-related claims, organizations should avoid overstating what AI can do and should ensure claims are supported; in a planning context, that translates into disciplined internal measurement, not just vendor promises.

Common mistakes that distort 2026 planning

These come up often when teams translate a 2026 AI market outlook into internal plans:

- Confusing pilots with adoption: a successful demo does not mean the workflow survives compliance and cost review.

- Ignoring integration work: the value sits in systems of record and systems of action, not in the model alone.

- Underinvesting in evaluation: without testing and monitoring, teams cannot prove improvement or detect regressions.

- Assuming one model fits all: some tasks call for small, fast models; others need larger context windows and tool access.

- Skipping policy design: if employees do not know what is allowed, adoption becomes either reckless or frozen.

When you should bring in outside expertise

Many teams can handle early rollouts internally, but outside help can be worth it when risk or complexity spikes. Consider it if:

- You need a formal governance program (policy, controls, audit readiness) across business units.

- You operate in heavily regulated environments and require model risk management alignment.

- Your costs are volatile and you need architecture changes to stabilize inference spend.

- You are selecting vendors and want an independent view of tradeoffs and lock-in risk.

If your use case touches sensitive personal data or safety-critical decisions, it is wise to involve legal, security, and domain specialists; requirements vary by industry and jurisdiction, so professional review often saves time later.

Conclusion: what to do next with your 2026 outlook

The most useful takeaway from any 2026 AI market forecast is not a single market number but a clearer plan for where your organization will place bets and how you will measure them. If you choose scenarios, track leading indicators, and fund the non-glamorous layers like governance and evaluation, 2026 planning starts to feel a lot less like guessing.

Next steps: pick two high-impact workflows to scale, define success metrics and risk thresholds now, then revisit your scenario assumptions every quarter based on cost and adoption signals.

Key takeaways

- Plan with scenarios rather than anchoring on one headline forecast.

- Separate ML and genAI in budgets and governance because adoption mechanics differ.

- Sector constraints matter; regulation and integration complexity often set the pace.

- Budget in layers so platforms, security, and change management do not become bottlenecks.

FAQ

- What is the most realistic way to use a 2026 AI market forecast for budgeting?

Use it to set scenario ranges and decision triggers, not to justify a fixed number. Tie funding releases to adoption and unit-cost metrics.

- How should I interpret 2026 U.S. AI industry growth projections if analysts disagree?

Check what each forecast includes in “AI” and which layer of the stack it measures. Differences often come from scope, not from one forecast being “wrong.”

- Will 2026 generative AI revenue projections translate into enterprise ROI automatically?

Not automatically. Vendor revenue can rise even when enterprise value is uneven, because ROI depends on workflow redesign, governance, and sustained usage.

- Which 2026 machine learning market trends matter most for non-technical leaders?

Operational reliability and monitoring. If ML systems drift without detection, performance degrades quietly and trust erodes.

- How can I estimate 2026 AI adoption rates in U.S. enterprises for my board deck?

Show an adoption ladder (explore, pilot, early scale, scale) and place your company on it with evidence such as active usage and production workflows.

- What drives 2026 U.S. AI spending more than model choice?

Governance requirements, integration costs, and usage-based pricing dynamics often drive the bill more than the specific model brand.

- Which industries are likely to lead AI growth in the U.S. in 2026?

Many expect strong activity in finance, healthcare operations, retail service automation, and manufacturing quality, but the pace varies with compliance and data readiness.

If you are building a 2026 plan and want a more defensible way to connect trend headlines to real budgets, it may help to map your use-case portfolio, governance needs, and vendor options into a simple scenario model you can update quarterly.