An AI ethics and governance framework is how US organizations turn “responsible AI” from a slogan into roles, controls, and decisions people can actually follow.

If you feel stuck between shipping fast and avoiding regulatory, reputational, and security surprises, you’re not alone. Most teams don’t fail because they don’t care; they fail because accountability stays fuzzy, documentation is thin, and escalation paths don’t exist until something breaks.

This guide focuses on practical governance mechanics: who owns what, what to document, which checkpoints matter across the AI model lifecycle, and how to connect risk management with audits and transparency expectations.

What an AI ethics and governance framework really includes (and what it doesn’t)

In many companies, “AI governance” becomes a policy PDF that nobody reads, or a review board that meets only after a launch goes sideways. A workable setup looks more like a lightweight operating system for AI decisions.

Core components you typically need:

- ethical AI governance model that defines principles in plain language, plus how those principles translate into approvals and constraints

- responsible AI oversight policy that sets scope: which systems count as AI, which vendors count, which uses are prohibited or require heightened review

- AI accountability structure with named owners for risk acceptance, not just “the team”

- AI risk management guidelines aligned to enterprise risk, privacy, security, and legal review workflows

- algorithmic transparency standards that fit your reality: external disclosures where needed, internal explainability and traceability everywhere

- AI compliance and audit process so evidence exists before an incident or regulator request

What it usually does not include is a single one-size-fits-all checklist. A fraud model, an HR screening tool, and a customer support chatbot can’t share identical controls because their risk profiles differ too much.

Why US organizations struggle: the failure points that show up repeatedly

Most governance gaps come from normal incentives: product teams want speed, legal teams want certainty, security teams want control, and leadership wants ROI. Without a shared structure, those incentives collide.

- Ambiguous ownership: nobody is clearly empowered to say “stop,” or to sign off on residual risk.

- Shadow AI: teams adopt models and tools via credit card spending, then governance discovers it late.

- Vendor opacity: “proprietary model” becomes an excuse for weak documentation and limited audit rights.

- Data drift and behavior drift: performance changes over time, but monitoring and retraining controls don’t exist.

- Bias debates without a method: teams argue values, but don’t agree on fairness metrics, cohorts, or testing cadence.

According to NIST’s AI Risk Management Framework (AI RMF), AI risk management should be ongoing and iterative across the system lifecycle, not a one-time gate before launch. That framing matters because governance has to live in the lifecycle, not in slide decks.
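To make the drift failure point above concrete, here is a minimal sketch of one common drift statistic, the Population Stability Index (PSI), computed between a baseline sample and a recent sample of the same model score or feature. The function name, the ten equal-width bins, and the `eps` floor are illustrative assumptions, not a prescribed method.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between two samples of one numeric feature or model score."""
    lo, hi = min(baseline), max(baseline)
    # Fall back to a width of 1.0 when the baseline is constant.
    width = (hi - lo) / bins or 1.0

    def bucket_shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp values that fall outside the baseline range.
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each share at eps so the logarithm is always defined.
        return [max(c / len(values), eps) for c in counts]

    base, cur = bucket_shares(baseline), bucket_shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


# Illustrative data: the "current" scores have shifted upward.
baseline_scores = [i / 100 for i in range(100)]
shifted_scores = [0.5 + i / 200 for i in range(100)]
psi_same = population_stability_index(baseline_scores, baseline_scores)
psi_shifted = population_stability_index(baseline_scores, shifted_scores)
```

A widely quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but those cutoffs are conventions. The governance question is who reviews the number and what happens when it crosses the line your team agreed on.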

Quick self-assessment: do you have “governance” or just good intentions?

If you answer “no” to several items below, you likely need more than a policy refresh; you need operational controls.

- We can list all AI systems in production, including vendor AI, with a current owner and business purpose.

- High-impact use cases have a documented risk assessment and a named approver for residual risk.

- We have a consistent fairness and bias mitigation strategy tied to defined cohorts and test thresholds.

- We can explain model inputs, key features or signals, and limitations to internal stakeholders without hand-waving.

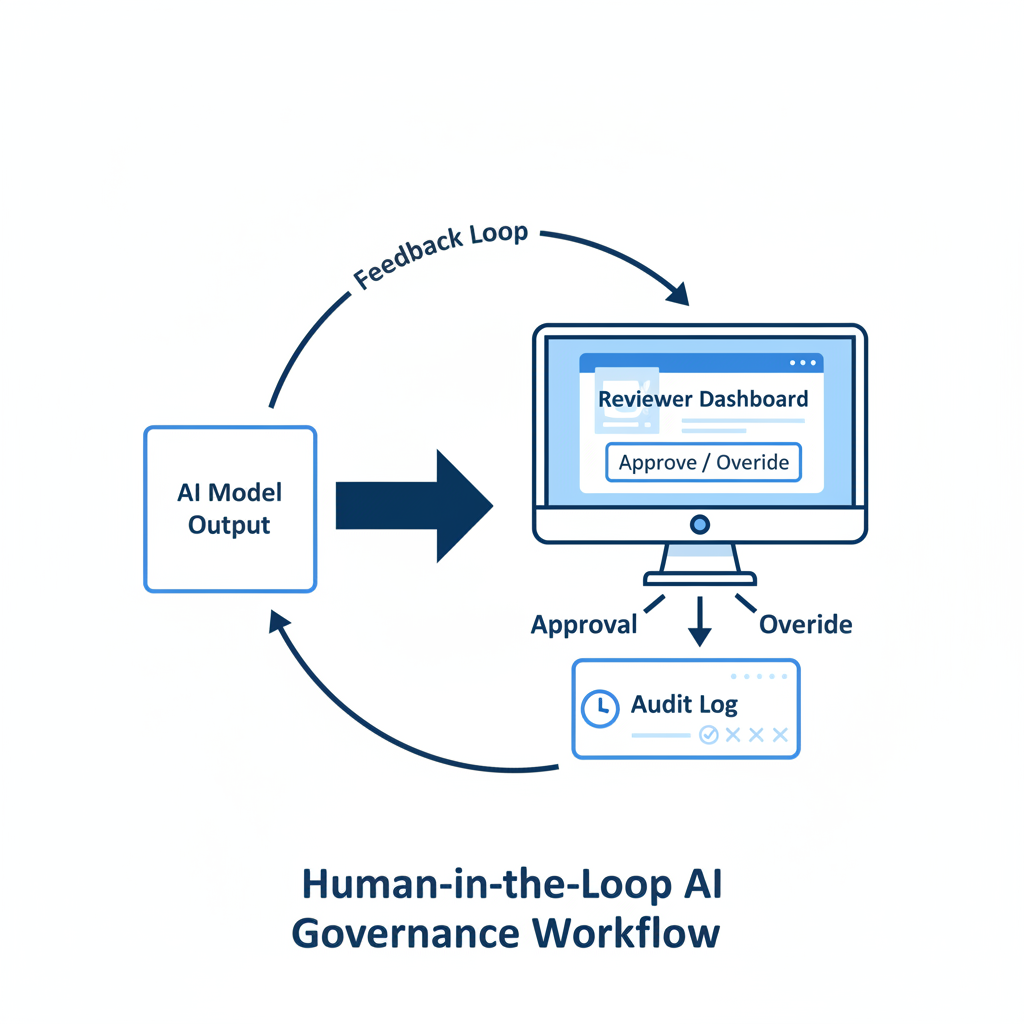

- There is a defined human-in-the-loop governance pattern where automation could harm individuals.

- We track model changes, approvals, and deployment history with evidence that supports audits.

- Incidents have an escalation path, including when to roll back a model or disable a feature.

Key point: You don’t need perfection. You need a defensible process that matches the risk level of the system.
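The first checklist item (a complete inventory with a current owner and business purpose) is the easiest one to automate. The sketch below assumes a hypothetical `AISystem` record and invented sample entries; the field names are illustrative and should be adapted to whatever catalog or CMDB you already run.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystem:
    name: str
    business_purpose: str
    owner: Optional[str]          # a named person, not a team alias
    vendor: Optional[str] = None  # None means built in-house
    in_production: bool = True


def inventory_gaps(systems: list[AISystem]) -> list[str]:
    """Names of production systems missing an owner or a business purpose."""
    return [
        s.name
        for s in systems
        if s.in_production and (not s.owner or not s.business_purpose.strip())
    ]


def inventory_coverage(systems: list[AISystem]) -> float:
    """Share of production systems that have both an owner and a purpose."""
    prod = [s for s in systems if s.in_production]
    if not prod:
        return 1.0
    return 1 - len(inventory_gaps(systems)) / len(prod)


# Hypothetical entries for illustration only.
systems = [
    AISystem("fraud-scoring", "Flag risky card transactions", owner="j.doe"),
    AISystem("support-chatbot", "Answer billing questions", owner=None,
             vendor="ExampleVendor"),
]
gaps = inventory_gaps(systems)
coverage = inventory_coverage(systems)
```

Running a check like this on a schedule turns “we think we know what we have” into a number that can be reported and improved.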

A practical operating model: roles, committees, and decision rights

An AI ethics and governance framework works when decision rights are explicit, especially when tradeoffs happen. In practice, many orgs combine a small central function with distributed product ownership.

Common accountability pattern (adapt as needed)

- Executive sponsor: sets risk appetite, resolves cross-functional conflicts, owns “go/no-go” for highest-risk deployments.

- AI governance lead (or office): maintains standards, intake, templates, and reporting, and runs the review cadence.

- Product/business owner: accountable for outcomes, user impact, and operational metrics after launch.

- Model owner (ML lead): accountable for technical performance, monitoring, retraining plan, and documentation.

- Legal/privacy/security: define mandatory controls, review data protection impact assessments (DPIAs) where applicable, and set vendor requirements.

- Internal audit: validates the AI compliance and audit process and evidence integrity.

What the review board should do (and not do)

A review board should decide on risk classification, required controls, and exception approvals. It should not re-litigate product strategy every meeting or become a bottleneck for low-risk systems.

Lifecycle controls that make governance real (intake to retirement)

Governance becomes measurable when it maps to the AI model lifecycle. The goal is consistency: the same types of questions get answered every time, with stricter depth for higher-impact uses.

| Lifecycle stage | Required artifacts | Typical controls |
|---|---|---|
| Intake & scoping | Use case brief, impact level, data sources | Prohibited use screening, vendor due diligence triggers |
| Data & training | Data lineage, consent/rights notes, labeling guidance | Privacy review, security classification, bias checks on datasets |
| Evaluation | Test plan, model card or equivalent, limitations | Fairness tests, robustness tests, red teaming for misuse |
| Deployment | Release notes, change log, monitoring plan | Approval gate, rollback plan, access controls, logging |
| Operations | Monitoring reports, incident tickets, retraining records | Drift monitoring, periodic reviews, user feedback loop |
| Retirement | Decommission plan, data retention notes | Access removal, archiving evidence for audits |
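One way to enforce the table above is a small gate that refuses to advance a system to the next stage until the required artifacts exist. The sketch below is a minimal illustration; the stage keys and artifact identifiers are assumed names derived from the table, not a standard vocabulary.

```python
# Required artifacts per lifecycle stage, mirroring the table above.
REQUIRED_ARTIFACTS: dict[str, set[str]] = {
    "intake": {"use_case_brief", "impact_level", "data_sources"},
    "data_training": {"data_lineage", "consent_rights_notes", "labeling_guidance"},
    "evaluation": {"test_plan", "model_card", "limitations"},
    "deployment": {"release_notes", "change_log", "monitoring_plan"},
    "operations": {"monitoring_reports", "incident_tickets", "retraining_records"},
    "retirement": {"decommission_plan", "data_retention_notes"},
}


def missing_artifacts(stage: str, submitted: set[str]) -> set[str]:
    """Return the artifacts still required before the stage can be approved."""
    if stage not in REQUIRED_ARTIFACTS:
        raise ValueError(f"Unknown lifecycle stage: {stage!r}")
    return REQUIRED_ARTIFACTS[stage] - submitted


def can_approve(stage: str, submitted: set[str]) -> bool:
    """A stage passes the gate only when nothing is missing."""
    return not missing_artifacts(stage, submitted)


# Example: a deployment request that forgot its monitoring plan.
outstanding = missing_artifacts("deployment", {"release_notes", "change_log"})
```

In practice the required set would likely vary by risk tier, so a low-risk system might need only a subset while a high-impact one needs everything plus sign-off.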

ISO/IEC standards for AI management systems and AI risk management (for example, ISO/IEC 42001 and ISO/IEC 23894) emphasize defined controls and continual improvement, which is another way of saying: build feedback loops, and don’t treat launch as the finish line.

From principles to practice: transparency, fairness, and human oversight

Teams often overpromise “explainability” and underdeliver “traceability.” For most enterprises, it’s more useful to define what must be knowable and provable, even when the model is complex.

Algorithmic transparency standards you can actually implement

- Internal transparency: document training data sources, feature categories, evaluation approach, and known failure modes.

- User-facing transparency: disclose AI involvement where it affects decisions or content, and provide a path for questions or appeals when appropriate.

- Traceability: keep versioning for prompts, policies, models, and datasets so outcomes can be reconstructed.
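As a rough illustration of the traceability point, the sketch below records which versions of the model, prompt, policy, and dataset produced a given output, and derives a stable identifier from that record. The `DecisionTrace` fields and version strings are hypothetical; the hashing uses Python’s standard `hashlib` and `json` modules.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DecisionTrace:
    model_version: str
    prompt_version: str
    policy_version: str
    dataset_version: str
    input_sha256: str  # hash of the input, so raw data need not be stored here
    output: str


def fingerprint(payload: str) -> str:
    """SHA-256 hex digest of a UTF-8 string."""
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def trace_id(trace: DecisionTrace) -> str:
    """Deterministic ID: the same versions, input, and output give the same ID."""
    canonical = json.dumps(asdict(trace), sort_keys=True)
    return fingerprint(canonical)


# Hypothetical version labels for illustration only.
trace = DecisionTrace(
    model_version="m-1.2.0",
    prompt_version="p-7",
    policy_version="pol-3",
    dataset_version="ds-2024-01",
    input_sha256=fingerprint("applicant record 123"),
    output="refer_to_human",
)
record_id = trace_id(trace)
```

Storing the record next to the decision log means an auditor can ask “which model and prompt produced this outcome?” and get an answer without anyone reconstructing it from memory.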

Fairness and bias mitigation strategy: make it testable

Fairness isn’t one metric, and it’s rarely “solved.” Pick a method, document why, then test it regularly.

- Define which protected or sensitive attributes you can measure, and what proxies are off-limits.

- Choose evaluation cohorts that reflect real users, not just clean benchmark datasets.

- Set thresholds that trigger action, and be honest about tradeoffs with accuracy and cost.
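To show what “testable” can look like, the sketch below computes selection rates per cohort and flags any cohort whose rate falls below a chosen fraction of the highest rate. The 0.8 default reflects the “four-fifths” rule of thumb used in US employment contexts; it is a screening heuristic, not a legal or statistical definition of fairness, and the function names and cohort labels are assumptions for illustration.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map cohort -> selected / total, skipping cohorts with no members."""
    return {c: sel / tot for c, (sel, tot) in outcomes.items() if tot > 0}


def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Each cohort's selection rate divided by the highest cohort rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values())
    if best == 0:
        # Nobody was selected in any cohort, so there is no disparity to measure.
        return {c: 1.0 for c in rates}
    return {c: r / best for c, r in rates.items()}


def flagged_cohorts(
    outcomes: dict[str, tuple[int, int]], threshold: float = 0.8
) -> list[str]:
    """Cohorts whose impact ratio is below the action threshold."""
    return sorted(c for c, ratio in impact_ratios(outcomes).items() if ratio < threshold)


# Invented counts: (selected, total) per cohort.
sample = {"cohort_a": (50, 100), "cohort_b": (30, 100), "cohort_c": (45, 100)}
flags = flagged_cohorts(sample)
```

A flag is a trigger for investigation, not a verdict. Small cohorts produce noisy ratios, so pair a check like this with minimum sample sizes and a documented review step.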

Human-in-the-loop governance: where it matters most

Human review adds cost, so apply it where harm can be high: eligibility decisions, adverse actions, medical or safety-related guidance, financial approvals, and anything that can meaningfully affect rights or access. For these cases, define reviewer training, queue design, and override authority, not just “a human checks it.”
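A routing rule is one simple way to express where human review applies. The sketch below assumes a hypothetical policy: adverse outcomes in high-harm categories always go to a reviewer, and so does anything below a confidence floor. The category names, the `Decision` fields, and the 0.9 floor are illustrative assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO = "auto"
    HUMAN_REVIEW = "human_review"


# Assumed category labels, loosely following the examples in the text.
HIGH_HARM_CATEGORIES = {
    "eligibility",
    "adverse_action",
    "medical_safety",
    "financial_approval",
}


@dataclass
class Decision:
    category: str
    model_confidence: float  # between 0.0 and 1.0
    is_adverse: bool         # True if the outcome denies or restricts something


def route(decision: Decision, min_confidence: float = 0.9) -> Route:
    """Decide whether a model output can be applied without human review."""
    if decision.category in HIGH_HARM_CATEGORIES and decision.is_adverse:
        return Route.HUMAN_REVIEW
    if decision.model_confidence < min_confidence:
        return Route.HUMAN_REVIEW
    return Route.AUTO


denial = route(Decision("eligibility", model_confidence=0.99, is_adverse=True))
faq = route(Decision("faq_answer", model_confidence=0.95, is_adverse=False))
```

The rule only decides who sees the case. Reviewer training, queue design, and override authority still have to be defined separately.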

Build your enterprise AI governance roadmap (90 days to steady state)

An enterprise AI governance roadmap works when it starts small, proves value, then expands coverage. Big-bang governance programs often stall because nobody can staff them.

Days 0–30: establish minimum viable governance

- Create an AI system inventory that includes vendors and “embedded AI” features in tools.

- Define risk tiers and required review depth per tier.

- Publish a short responsible AI oversight policy: scope, prohibited uses, and escalation path.
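Risk tiers are easier to apply consistently when the classification rule is written down as logic instead of left to judgment in each meeting. The sketch below is one possible scheme under stated assumptions: three tiers, three yes/no intake questions, and review depths invented for illustration. Your own criteria and tier names will differ.

```python
from enum import IntEnum


class Tier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def classify(
    affects_individual_rights: bool,
    uses_sensitive_data: bool,
    fully_automated: bool,
) -> Tier:
    """Assign a risk tier from three intake answers (an assumed rule set)."""
    if affects_individual_rights:
        return Tier.HIGH
    if uses_sensitive_data or fully_automated:
        return Tier.MEDIUM
    return Tier.LOW


# Hypothetical review depth per tier.
REVIEW_DEPTH = {
    Tier.LOW: "self-attestation by the model owner",
    Tier.MEDIUM: "governance lead review plus privacy and security sign-off",
    Tier.HIGH: "review board decision and executive sponsor go/no-go",
}

hiring_tool = classify(affects_individual_rights=True,
                       uses_sensitive_data=True,
                       fully_automated=False)
internal_search = classify(False, False, False)
```

Keeping the rule this small makes it quick to fill in at intake, which matters more in the first 30 days than getting every edge case right.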

Days 31–60: standardize artifacts and controls

- Roll out templates: intake form, model card, evaluation plan, monitoring plan.

- Implement AI model lifecycle controls in your delivery process: change management, approvals, logging.

- Set minimum AI risk management guidelines for data handling, security testing, and privacy review.

Days 61–90: operationalize audit readiness

- Define the AI compliance and audit process: evidence storage, sampling, review cadence.

- Run a tabletop incident exercise for one high-impact system, then tune escalation and rollback steps.

- Start reporting: inventory coverage, open risks, exceptions, and monitoring health.

According to the U.S. Equal Employment Opportunity Commission (EEOC), employers should consider how algorithmic tools may create disparate impact in hiring and employment contexts. Even outside HR, the lesson translates: measure impact, don’t assume neutrality.

Common mistakes that waste time (and increase risk)

- Copy-pasting a framework without tailoring: it reads well, but doesn’t match your data, tools, or approval reality.

- Only focusing on model performance: accuracy can improve while risk gets worse, especially with privacy leakage or misuse.

- Governance that ignores vendors: third-party models still need accountability, contract rights, and monitoring expectations.

- One-time bias testing: fairness checks at launch don’t catch drift, user mix changes, or new edge cases.

- No exception process: teams will bypass governance if there’s no legitimate way to request a variance with documented sign-off.

Key takeaway: governance must be easier than going around it; otherwise it becomes theater.

When you should bring in experts (and what to ask them)

Some situations merit specialized help, not because your team is weak, but because the stakes or complexity rise quickly. In these cases, consider consulting legal counsel, privacy professionals, security specialists, or independent auditors, depending on the risk.

- You’re deploying AI in regulated contexts (finance, healthcare, employment, education, critical infrastructure).

- You can’t get enough transparency from a vendor to meet internal standards.

- You’ve had a material incident, or you expect external scrutiny and need an evidence-backed response.

- You need to design appeals, adverse action notices, or user disclosures, where wording and process details matter.

According to the Federal Trade Commission (FTC), companies should be careful about misleading claims related to AI and should consider fairness and transparency. If you’re unsure whether your disclosures or practices could be seen as deceptive or unfair, getting advice early can be cheaper than cleaning up later.

Conclusion: make governance boring, repeatable, and provable

An AI ethics and governance framework pays off when it reduces uncertainty for builders and reviewers at the same time: teams know what evidence to produce, leaders know what risks they’re accepting, and audits stop being fire drills.

If you want one next step that actually moves things forward, start with an inventory and a risk-tiering scheme, then attach lifecycle checkpoints to each tier. Everything else becomes easier once the organization agrees on “what we have” and “how risky it is.”