Artificial intelligence applications show up in U.S. businesses less as “big futuristic bets” and more as small, repeatable wins: fewer manual handoffs, faster decisions, and customer experiences that feel less clunky.

If you’re evaluating AI right now, the hard part usually isn’t whether AI works; it’s choosing use cases that fit your data, your risk tolerance, and your team’s ability to maintain the system after the demo ends.

This guide breaks down 12 practical ways companies are using AI today, grouped into common functions like customer support, marketing, operations, cybersecurity, and regulated industries. You’ll also get a quick self-check, a simple prioritization method, and a few “don’t do this first” warnings that save time.

Where AI delivers value (and why some projects stall)

In real operations, AI tends to earn its keep in three patterns: automation, prediction, and content generation. When an initiative stalls, it’s often because the use case picked doesn’t match the data quality, compliance constraints, or workflow ownership.

- Automation: Reduces repetitive work, speeds up response times, standardizes outcomes. Think AI customer service automation, invoice triage, or ticket routing.

- Prediction: Improves planning, forecasting, and risk detection. This is where many machine learning use cases live, like churn prediction or demand forecasting.

- Generation: Produces drafts of text, images, code, or summaries. Many generative AI use cases fit here, but the guardrails matter.

According to NIST, trustworthy AI includes considerations like safety, security, and transparency. In practice, that means you plan for monitoring, audit trails, and human review where mistakes cost real money or trust.

12 practical artificial intelligence applications U.S. businesses use today

Not every company needs all 12. The point is to recognize patterns, then pick the few that align with your goals, your systems, and what you can support quarter after quarter.

1) AI customer service automation (chat, email, and call support)

This is one of the fastest “time-to-value” plays: AI drafts replies, summarizes past interactions, and suggests next steps so agents handle more tickets without sacrificing tone.

- Best for: high volume support, repeat questions, knowledge-base heavy products

- Watch-outs: outdated policies in the knowledge base, hallucinated answers, brand voice drift

2) Ticket routing and workforce triage

Even without a customer-facing bot, AI can classify tickets by urgency, topic, and customer tier, then route them to the right queue. It’s a quiet productivity gain, and one that teams tend to keep using after the pilot ends.

3) Marketing automation: personalization and creative iteration

In marketing automation, AI helps segment audiences, propose subject lines, generate ad variations, and summarize campaign performance. The practical win is speed: more tests, tighter feedback loops.

Many teams do better when they set rules like “AI drafts, humans approve,” especially for regulated claims or sensitive topics.

4) Sales enablement: call summaries, next-step suggestions, and CRM hygiene

AI can summarize discovery calls, pull out objections, and draft follow-up emails. It also helps with CRM completeness, which sounds boring until you realize forecasts depend on it.

5) Finance: anomaly detection and smarter reconciliation

AI in finance often starts with anomaly detection: unusual transactions, duplicate invoices, out-of-pattern spend. These are classic machine learning use cases because the model learns “normal,” then flags exceptions.

- Best for: AP/AR operations, procurement, expense auditing

- Watch-outs: false positives that create new busywork, unclear ownership for investigations
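To make the “learn normal, then flag exceptions” idea concrete, here is a minimal sketch in Python. It is not a production model: it assumes a plain list of invoice amounts and uses a modified z-score (median and median absolute deviation) as a simple stand-in for a trained anomaly detector. The function name, sample data, and the 3.5 threshold are all illustrative assumptions.

```python
from statistics import median

def flag_anomalies(amounts, threshold=3.5):
    """Return indices of amounts whose modified z-score exceeds the threshold.

    Assumption: "normal" is summarized by the median and the median
    absolute deviation (MAD), which a few extreme values barely move.
    """
    med = median(amounts)
    mad = median(abs(a - med) for a in amounts)
    if mad == 0:
        # No measurable spread, so this simple method cannot score anything.
        return []
    # 0.6745 rescales MAD so the score is comparable to a standard z-score.
    return [
        i for i, a in enumerate(amounts)
        if 0.6745 * abs(a - med) / mad > threshold
    ]

# Hypothetical invoice amounts; the sixth one is far out of pattern.
invoices = [120.0, 135.5, 128.0, 119.9, 131.2, 4200.0, 126.4]
suspicious = flag_anomalies(invoices)
print(suspicious)  # [5]
```

Raising the threshold trades missed anomalies for fewer false positives, which is the same tuning decision behind the “new busywork” watch-out above.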

6) Cybersecurity: phishing detection and alert prioritization

AI in cybersecurity can help identify suspicious logins, detect phishing patterns, and reduce alert fatigue by prioritizing what matters. According to CISA, organizations should treat AI as part of broader cyber risk management, not a replacement for fundamentals like patching and access controls.

7) Retail: demand forecasting, merchandising, and fraud prevention

AI in retail shows up in forecasting store demand, optimizing promotions, and spotting return fraud. Even a modest improvement in forecasting can reduce stockouts and overstock, which is where cash quietly disappears.

8) Manufacturing: predictive maintenance and quality inspection

AI in manufacturing is strongest when you already collect machine sensor data or have consistent inspection images. Predictive maintenance flags components likely to fail; on many lines, computer vision catches defects earlier than manual checks do.

- Best for: high-throughput lines, expensive downtime, repeatable visual defects

- Watch-outs: inconsistent camera setup, changing lighting, limited labeled defect data

9) Healthcare: documentation support and imaging assistance

AI in healthcare commonly supports clinical documentation, scheduling, and imaging assistance. It can reduce admin burden, but clinical use requires careful validation, privacy controls, and oversight; many situations call for consultation with compliance and medical professionals.

According to the FDA, some AI-enabled software may be regulated as a medical device depending on intended use, so it’s worth checking early if you operate in or sell into clinical settings.

10) Education and training: tutoring, assessment support, and content drafts

AI in education often means personalized practice, automated feedback drafts, and internal employee training content. The key is keeping humans in the loop for grading fairness, sensitive topics, and alignment with curriculum requirements.

11) Operations: document processing and workflow extraction

Think contracts, onboarding packets, compliance forms, and vendor docs. AI can extract fields, summarize clauses, and trigger workflow steps. This tends to be a durable artificial intelligence application because the value stays even when you change vendors or systems.

12) Software and IT: code assistance and incident response summaries

AI can draft code suggestions, write unit test outlines, and summarize incident timelines. Used well, it frees engineers for higher-level work; used poorly, it can introduce subtle bugs, so peer review and testing remain non-negotiable.

Quick self-check: which use cases fit your company right now?

If you’re trying to pick a starting point, don’t start with the “coolest” demo. Start with the use case that meets at least a few of these criteria.

- Clear owner: one team owns the workflow end-to-end, not “everyone and no one.”

- Measurable baseline: you already track time-to-resolution, cost per ticket, scrap rate, or similar.

- Reasonable data access: the data exists in systems you can legally and securely use.

- Low blast radius: if the AI makes a mistake, a human can catch it before harm or compliance risk.

- Repeatable volume: enough throughput to justify automation or prediction.

If you answered “no” to most bullets, that’s not a failure; it just means your first project should probably be internal, narrow, and heavily supervised.

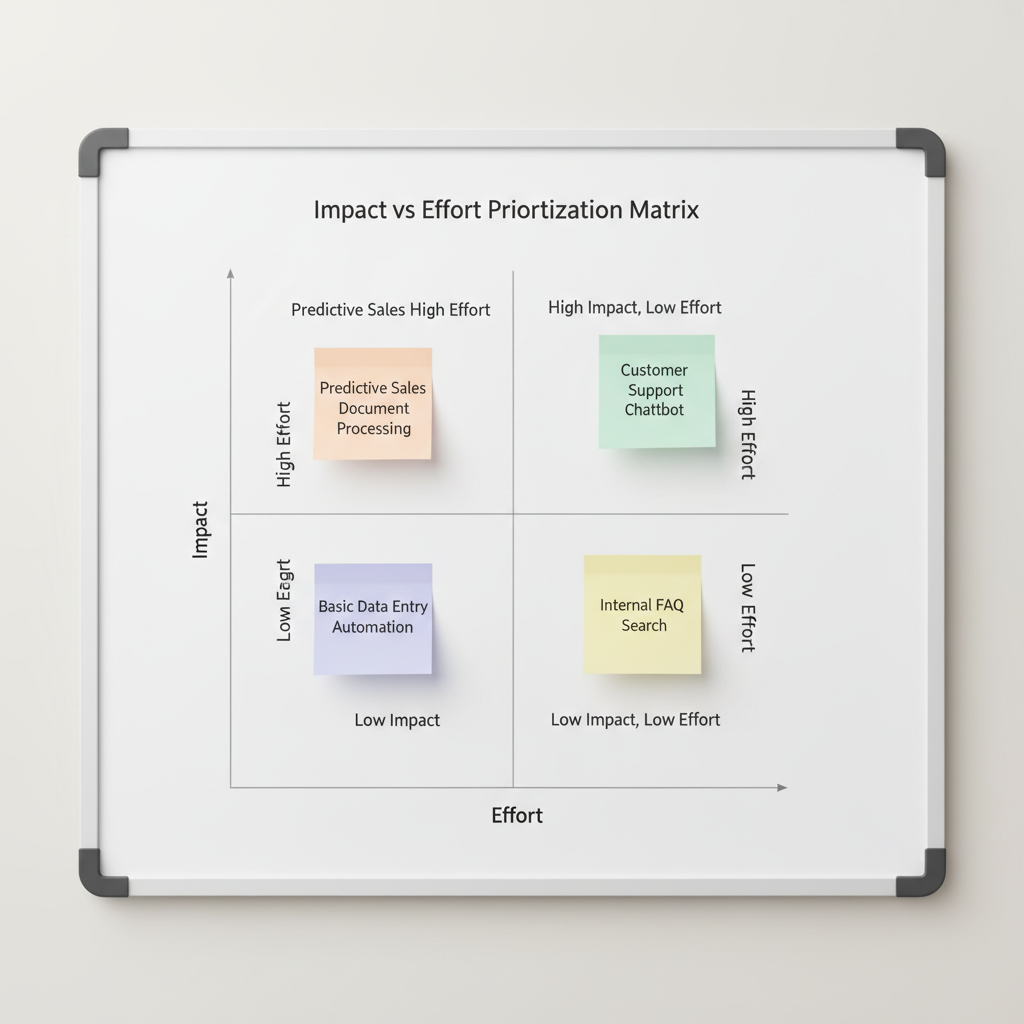

A practical way to prioritize: the 4-box scorecard

Here’s a simple scorecard teams use to compare artificial intelligence applications without overthinking it. Give each category a 1–5 score, then discuss the gaps.

| Category | What to ask | Example signal |
|---|---|---|
| Business impact | What changes if this works? | Lower support cost, fewer chargebacks, less downtime |
| Data readiness | Do we have usable, consistent data? | Clean tickets, labeled defects, stable product taxonomy |
| Risk & compliance | What’s the downside if AI is wrong? | Customer trust, regulatory exposure, safety risk |
| Adoption effort | Will teams actually use it daily? | Fits current tools, minimal workflow disruption |

Key point: the “best” idea often loses to the “easiest to operationalize.” That’s normal, and it’s usually the right call early on.
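If it helps to see the scorecard as arithmetic, here is a small Python sketch. It rests on an assumption the scorecard itself leaves open: every category is scored so that 5 is the most favorable (for risk, 5 means a low downside; for adoption effort, 5 means an easy fit). The class name, the candidate use cases, and the “below 3 is a gap” cutoff are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Scorecard:
    name: str
    business_impact: int   # 1-5, 5 = large measurable change
    data_readiness: int    # 1-5, 5 = clean, consistent data
    risk_compliance: int   # 1-5, 5 = low downside if the AI is wrong (assumed direction)
    adoption_effort: int   # 1-5, 5 = fits current tools (assumed direction)

    def scores(self):
        return {
            "business_impact": self.business_impact,
            "data_readiness": self.data_readiness,
            "risk_compliance": self.risk_compliance,
            "adoption_effort": self.adoption_effort,
        }

    def total(self):
        return sum(self.scores().values())

    def gaps(self, floor=3):
        # Categories scoring below the floor are the ones worth discussing.
        return [category for category, score in self.scores().items() if score < floor]

# Hypothetical candidates with made-up scores.
candidates = [
    Scorecard("Pricing decisions", 5, 2, 1, 2),
    Scorecard("Ticket routing", 3, 4, 4, 5),
]
ranked = sorted(candidates, key=lambda c: c.total(), reverse=True)
for c in ranked:
    print(c.name, c.total(), c.gaps())
```

In this made-up example the higher-impact idea ranks second because three of its four categories fall below the floor, which is the “easiest to operationalize” effect described above.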

Implementation playbook: from pilot to production without regret

Many AI projects die in the handoff between a pilot and real operations. The fix is mostly process, not magic.

- Define success before you build: one metric, one baseline, one target time window.

- Choose the right pattern: automate, predict, or generate, then pick tooling that matches.

- Design the human review loop: who approves outputs, and when?

- Start with a constrained scope: one channel, one region, one product line.

- Set monitoring early: quality drift, latency, cost per request, escalation rate.

- Document decisions: prompts, model versions, data sources, and exceptions.
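The monitoring step above can start very small. The Python sketch below assumes you already log one record per AI request with its latency, cost, and whether a human had to take over; the `RequestLog` fields and the sample numbers are hypothetical, and quality drift is left out because it needs a labeled quality signal.

```python
from dataclasses import dataclass

@dataclass
class RequestLog:
    latency_ms: float
    cost_usd: float
    escalated: bool  # True if the request was handed to a human

def summarize(logs):
    """Aggregate the basic monitoring metrics for a batch of request logs."""
    n = len(logs)
    if n == 0:
        raise ValueError("no requests to summarize")
    return {
        "requests": n,
        "avg_latency_ms": sum(log.latency_ms for log in logs) / n,
        "cost_per_request_usd": sum(log.cost_usd for log in logs) / n,
        "escalation_rate": sum(log.escalated for log in logs) / n,
    }

# Hypothetical sample of four requests.
logs = [
    RequestLog(800.0, 0.002, False),
    RequestLog(1200.0, 0.004, True),
    RequestLog(1000.0, 0.003, False),
    RequestLog(1000.0, 0.003, False),
]
summary = summarize(logs)
print(summary)
```

Comparing this summary week over week against the baseline you defined in the first step is usually enough to notice when something has changed.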

According to ISO/IEC guidance on AI governance, controls and accountability matter as systems scale. Even if you’re not “doing formal governance,” basic documentation keeps you from relearning the same lesson every quarter.

Common mistakes (the ones that waste the most time)

- Buying a tool before you pick a workflow: a platform can’t rescue an unclear process.

- Assuming more data automatically helps: messy data can make models confidently wrong.

- Skipping change management: if frontline teams don’t trust it, adoption stalls.

- No policy for sensitive data: decide what can and cannot go into prompts and logs.

- Forgetting maintenance: models and prompts drift as products, customers, and policies change.
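A sensitive-data policy is easier to enforce when it runs in code before text reaches a prompt or a log. The Python sketch below is only an illustration: it assumes the policy covers email addresses and U.S. Social Security number patterns, and the two regular expressions are deliberately simple, so they will miss other formats and other kinds of sensitive data.

```python
import re

# Simplified patterns (assumptions for illustration, not complete validators).
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

def redact(text):
    """Replace email addresses and SSN-like patterns with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return SSN.sub("[SSN]", text)

prompt = redact("Customer jane.doe@example.com, SSN 123-45-6789, asks about a refund.")
print(prompt)  # Customer [EMAIL], SSN [SSN], asks about a refund.
```

Applying the same function to anything written to logs keeps the prompt policy and the logging policy from drifting apart.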

When to involve specialists (and why it’s worth it)

Some artificial intelligence applications are “safe to try” with a small team. Others deserve expert support early, because the risk isn’t theoretical.

- Regulated environments: for healthcare, finance, education, or anything with strict privacy rules, bring in compliance and legal review.

- Security-sensitive deployments: if AI touches auth, access, or threat response, consult security leadership.

- Clinical or safety impact: for AI in healthcare or safety-critical manufacturing, involve qualified professionals and validate carefully.

- High-stakes customer decisions: credit, pricing, eligibility, or employment-related workflows may require deeper fairness and audit considerations.

If you’re unsure where your use case lands, a short risk review often saves you from building something you later have to pull back.

Conclusion: pick two use cases, prove value, then scale

The healthiest way to approach AI is boring on purpose: choose one or two artificial intelligence applications with clear owners and measurable baselines, run a tight pilot, and only then expand. Once you build the habit of monitoring, review, and documentation, adding more machine learning use cases or generative AI use cases gets dramatically easier.

Action steps: pick one workflow that already has volume and a clear metric, then write a one-page plan covering data access, human review, and how you’ll measure success in 30 days.

Key takeaways

- Automation, prediction, and generation are the three practical buckets that cover most business AI.

- Data readiness and workflow ownership matter as much as model quality.

- Start low-risk, operationalize monitoring, then scale into higher-stakes domains.